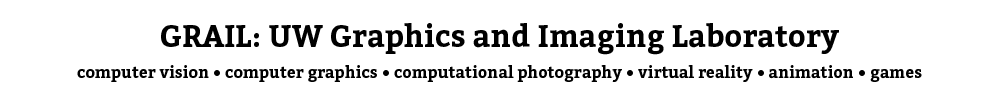

Transfiguring Portraits

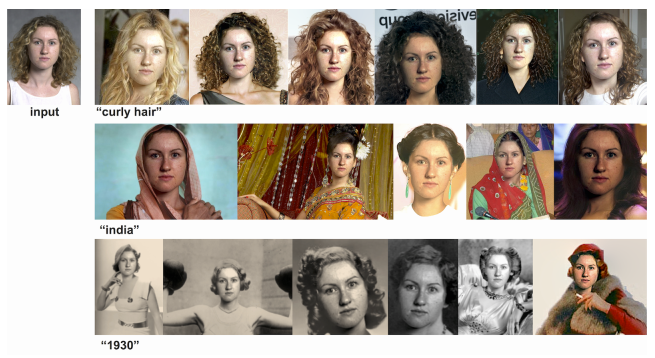

MegaFace

MegaFace is the largest face recognition benchmark in the world. It currently includes two large datasets and challenges: 1) 1 million unlabeled photos for testing face recognition at scale, and 2) 4.7 million labeled photos capturing more than 690K identities, suitable for training. MegaFace was created with the idea that face recognition should be tested at the scale of a million photos. More than 500 groups worldwide are currently working with MegaFace. See also stories in The Atlantic, TechCrunch, and IEEE Spectrum.

Virtual Reality Capstone Class!

Ricardo Martin-Brualla (and GRAIL) in the Seattle Times!

PhD student Ricardo Martin-Brualla explains his presentation, "Time-lapse Mining from Digital Photos," which describes his technique for creating multi-year time-lapses of locations from community photo collections. He presented the work at the Computer Science and Engineering program's annual Industry Affiliates Meeting at the Paul G. Allen Center for Computer Science and Engineering at the University of Washington on Tuesday, Oct. 20, 2015. His automated approach clusters 86 million photos taken over several years by landmark, then sorts each cluster into groups that share a common viewpoint. Using it, Martin-Brualla has rendered 10,768 time-lapses of 2,942 landmarks around the world.

More information about Ricardo's work and the department can be found in the Seattle Times article.

"What Makes Tom Hanks Look Like Tom Hanks" wins Madrona Prize!

Supasorn Suwajanakorn is the recipient of this year's Madrona Prize at the CSE Industry Affiliates Meeting, for his work with Ira Kemelmacher-Shlizerman and Steve Seitz titled "What Makes Tom Hanks Look Like Tom Hanks" (to be presented at ICCV 2015). The Madrona Prize is given to the research project that best combines exciting research with commercial potential.

This work has received press coverage from MIT Technology Review, The Atlantic, Mashable, and Discovery News, and won GeekWire's "Innovation of the Year" award.

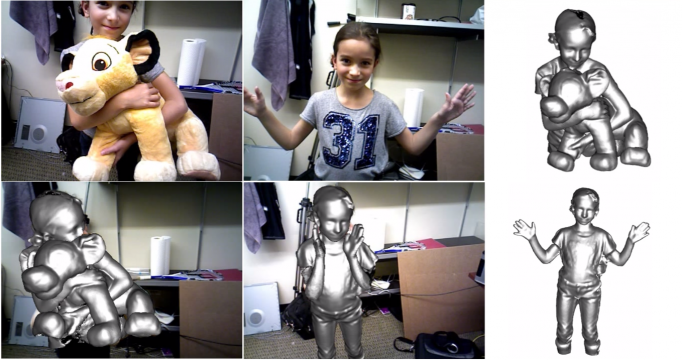

CVPR '15 Best Paper Award!

"DynamicFusion: Reconstruction and Tracking of Non-rigid Scenes in Real-Time," authored by Richard Newcombe, Dieter Fox, and Steven Seitz, won the Best Paper award at the CVPR 2015 conference. Congratulations!

Summer 2015 Google Faculty Research Awards

Professor Brian Curless received one of this summer's Google Faculty Research Awards, in the area of "Physical Interactions and Immersive Experiences." Seth Cooper, a Ph.D. alum and former Creative Director of the UW Center for Game Science who is now at Northeastern University, also received an award, in the area of "Human-Computer Interaction."

Congratulations to Brian and Seth!

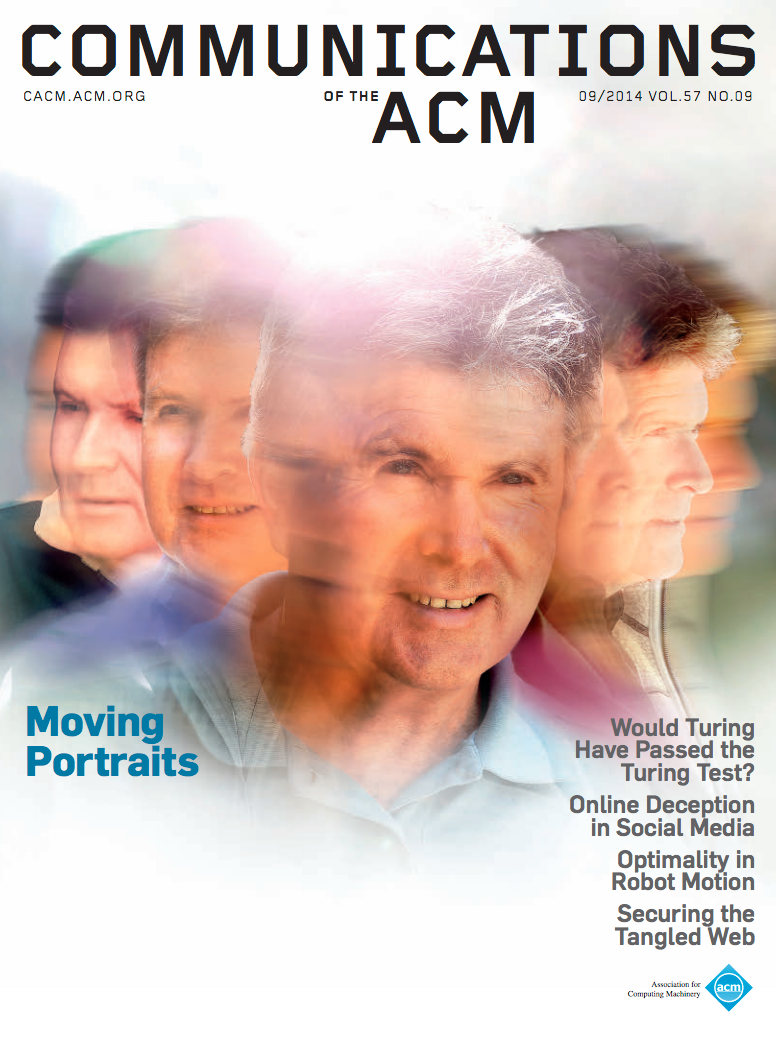

"Moving Portraits" Featured in CACM

"Moving Portraits," work by Ira Kemelmacher-Shlizerman, Eli Shechtman, Rahul Garg, and Steve Seitz, was featured in the "Research Highlights" section of the September 2014 issue of Communications of the ACM and was selected for that issue's cover.

Additional information about the article, including an interview with Ira, is available on the project's web site.

Aging in the News!

Our research on aging, "Illumination-Aware Age Progression" by Ira Kemelmacher-Shlizerman, Supasorn Suwajanakorn, and Steve Seitz, presented at CVPR 2014, has been picked up by news organizations far and wide: from local TV and radio stations, including KOMO News, KING5, KIRO-TV, and KUOW (NPR), to national and international outlets such as NBC's "Today Show," the Daily Mail (UK), and more!

More information on the research, and additional press coverage, is available at the project's web site.

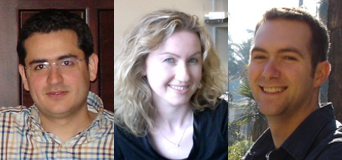

Three New GRAIL Faculty!

Ali Farhadi (left) is an expert on object recognition; he joins from CMU as an Assistant Professor.

Ira Kemelmacher-Shlizerman (middle) joins as an Assistant Professor. She is known for her work on human face modeling and is an expert in Internet-scale computer vision. She was previously a postdoc at UW.

Jeff Heer (right) focuses on visualization and HCI. He was previously an Assistant Professor at Stanford and will join us in the fall of 2013 as an Associate Professor.

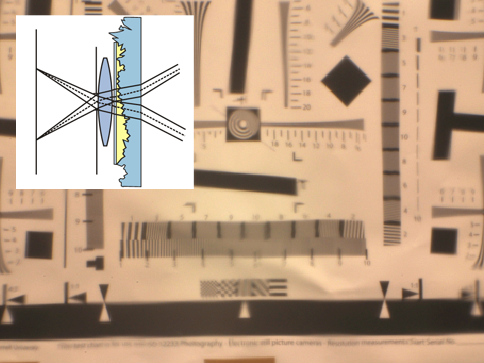

Vision Research in PBS NOVA episode

Recent work on seeing through obscure glass by Qi Shan, Brian Curless, and Tadayoshi Kohno was featured in a PBS "NOVA Science Now" episode on science and crime.

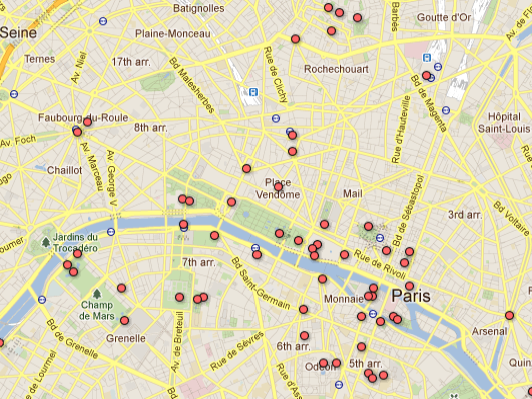

Photo Tours in Google Maps

The recently released Photo Tours feature in Google Maps builds on the Photo Tours research by Avanish Kushal and Ben Self, presented at 3DIMPVT 2012.

"Photobios" Research in the News

"Exploring Photobios," presented at SIGGRAPH 2011, is now also part of Google Picasa's "Face Movies" feature. In this video, Ira speaks with the local NBC affiliate about the research.

Intel Visual Computing Center

The GRAIL lab is part of the new Intel Visual Computing Center, focused on open, collaborative, and exploratory research in visual computing.

Intel CEO presents GRAIL's 3D reconstruction work

At the Intel Developer Forum in San Francisco, Intel CEO Paul Otellini demonstrated how the company's processors are being used to render a 3D model from millions of user-generated images taken from photo-sharing sites like Flickr and Picasa. The work is being done at the University of Washington, where researchers have crowd-sourced images from the Web and created a 3D reconstruction of St. Peter's Basilica in Vatican City.

"Catch and Release" at SIFF!

Catch and Release, the latest animated short from the department's Animation Capstone course, was screened at the Seattle International Film Festival (SIFF).

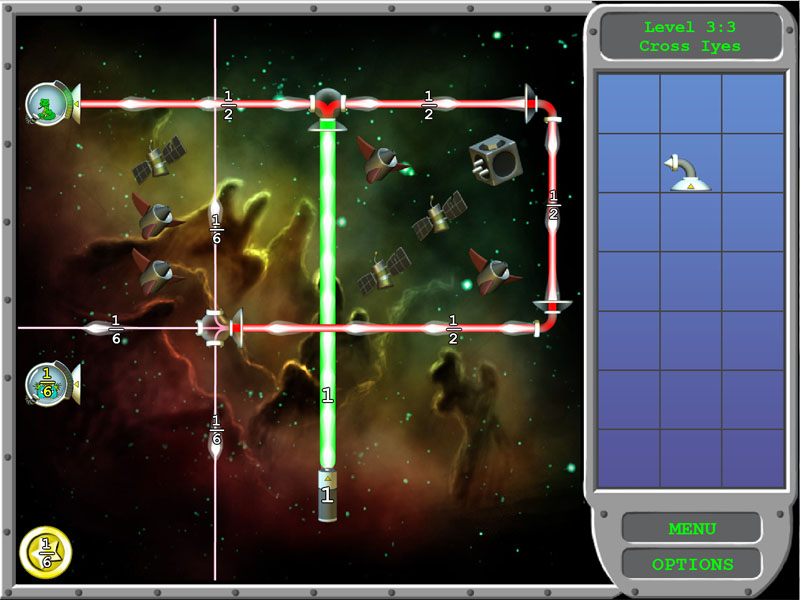

"Refraction" puzzle game wins prizes!

Refraction, an online puzzle game for teaching fractions through gameplay, has won both the 2011 NHK Japan Prize and the Grand Prize in the 2010 Disney Research Learning Challenge.

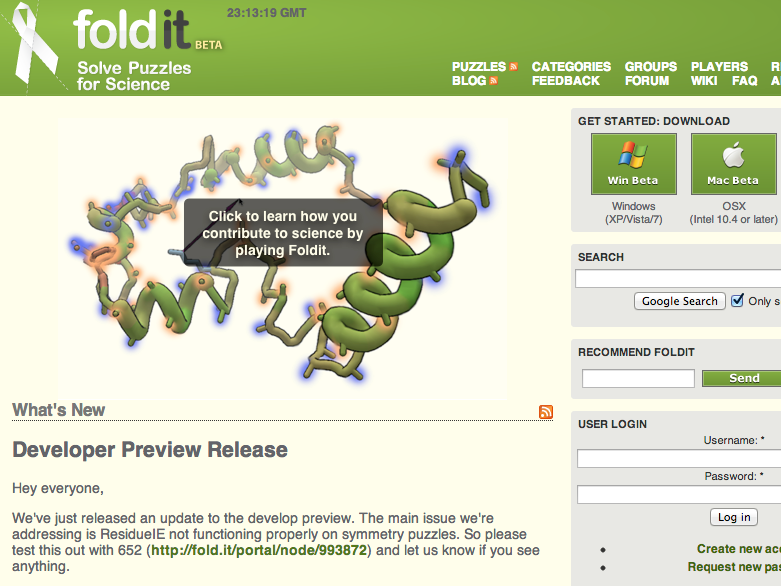

Foldit: Solving Protein Puzzles for Science

Foldit is an online 3D game developed by the Center for Game Science in collaboration with researchers in the UW Department of Biochemistry, in which players use a variety of tools to interactively twist, jiggle, and reshape proteins. In 2011, a group of Foldit players discovered the structure of a protein that could be used to help fight HIV and AIDS.