Manhattan-World Stereo


Abstract

Multi-view stereo (MVS) algorithms now produce reconstructions that rival laser range scanner accuracy. However, stereo algorithms require textured surfaces, and therefore work poorly for many architectural scenes (e.g., building interiors with textureless, painted walls). This paper presents a novel MVS approach to overcome these limitations for Manhattan World scenes, i.e., scenes that consist of piecewise planar surfaces with dominant directions. Given a set of calibrated photographs, we first reconstruct textured regions using an existing MVS algorithm, then extract dominant plane directions, generate plane hypotheses, and recover per-view depth maps using Markov random fields. We have tested our algorithm on several datasets ranging from office interiors to outdoor buildings, and demonstrate results that outperform the current state of the art for such texture-poor scenes.
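The direction-extraction and hypothesis-generation steps described above can be illustrated with a small stand-alone sketch. It assumes the existing MVS step yields oriented points (a 3D position plus a unit normal). The greedy normal voting, the cross-product shortcut for the third axis, and the gap-based grouping of plane offsets are simplifications chosen for illustration, not the authors' exact procedure. All function names and thresholds (`angle_tol_deg`, `gap`, `min_support`) are hypothetical.

```python
import math


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(a):
    length = math.sqrt(dot(a, a))
    return (a[0] / length, a[1] / length, a[2] / length)


def strongest_direction(normals, cos_tol):
    """Axis supported by the most normals (sign-agnostic), averaged over its support.

    Hypothetical helper: simple O(N^2) voting, used here only for illustration.
    """
    if not normals:
        raise ValueError("no normals to vote with")
    seed, support = None, []
    for candidate in normals:
        votes = [n for n in normals if abs(dot(n, candidate)) >= cos_tol]
        if len(votes) > len(support):
            seed, support = candidate, votes
    # Flip each supporting normal onto the seed's hemisphere before averaging.
    acc = [0.0, 0.0, 0.0]
    for n in support:
        sign = 1.0 if dot(n, seed) >= 0 else -1.0
        for i in range(3):
            acc[i] += sign * n[i]
    return normalize(tuple(acc))


def dominant_directions(normals, angle_tol_deg=10.0):
    """Three roughly orthogonal scene axes from unit point normals (assumed input)."""
    cos_tol = math.cos(math.radians(angle_tol_deg))
    sin_tol = math.sin(math.radians(angle_tol_deg))
    d1 = strongest_direction(normals, cos_tol)
    # Second axis: vote only among normals roughly perpendicular to d1.
    d2 = strongest_direction([n for n in normals if abs(dot(n, d1)) <= sin_tol], cos_tol)
    # Simplification: complete the frame with a cross product.
    d3 = normalize(cross(d1, d2))
    return [d1, d2, d3]


def plane_hypotheses(points, normals, directions,
                     angle_tol_deg=10.0, gap=0.05, min_support=3):
    """Candidate planes (d, offset) with d . X = offset, one per cluster of offsets."""
    cos_tol = math.cos(math.radians(angle_tol_deg))
    hypotheses = []
    for d in directions:
        # Offsets along d of the points whose normals agree with d.
        offsets = sorted(dot(p, d) for p, n in zip(points, normals)
                         if abs(dot(n, d)) >= cos_tol)
        group = []
        for o in offsets + [math.inf]:  # sentinel flushes the last group
            if group and o - group[-1] > gap:
                if len(group) >= min_support:
                    hypotheses.append((d, sum(group) / len(group)))
                group = []
            group.append(o)
    return hypotheses
```

The voting loop is quadratic in the number of points, which is acceptable for a toy example. A histogram over the sphere of directions would scale better to real MVS point clouds.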

Paper

Yasutaka Furukawa, Brian Curless, Steven M. Seitz, and Richard Szeliski. Manhattan-World Stereo. CVPR 2009.


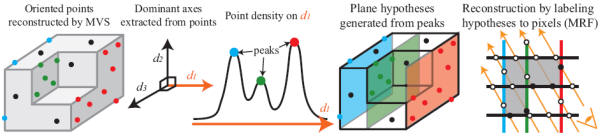

Algorithm overview

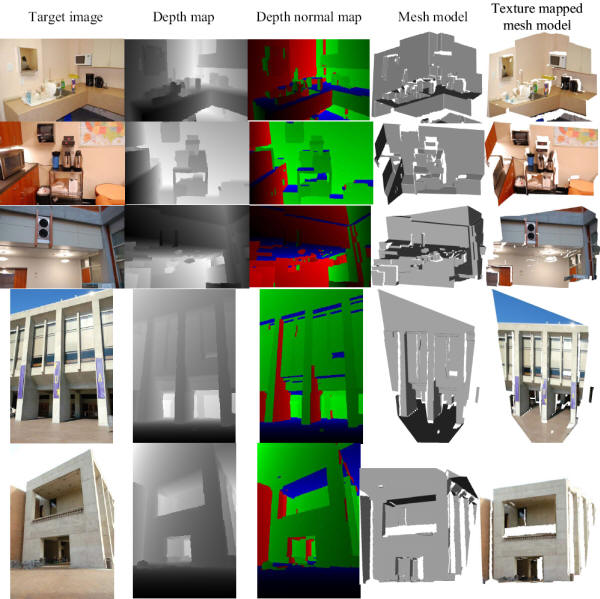

Reconstructed depth maps (per-pixel plane information)
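Each reconstructed depth map assigns a plane to every pixel. A depth value then follows from intersecting that pixel's viewing ray with the assigned plane. The sketch below assumes a pinhole camera at the origin looking down +z, intrinsics `fx, fy, cx, cy`, and planes already expressed in camera coordinates as `normal . X = offset`. These conventions and the helper names are hypothetical, chosen only to illustrate the geometry.

```python
def depth_from_plane(u, v, normal, offset, fx, fy, cx, cy, eps=1e-9):
    """Z-depth where pixel (u, v)'s viewing ray meets the plane normal . X = offset.

    Assumes a pinhole camera at the origin looking down +z, with the plane
    already in camera coordinates. Returns None when the ray is parallel to
    the plane or the intersection lies behind the camera.
    """
    ray = ((u - cx) / fx, (v - cy) / fy, 1.0)  # z-component fixed to 1
    denom = normal[0] * ray[0] + normal[1] * ray[1] + normal[2] * ray[2]
    if abs(denom) < eps:
        return None
    t = offset / denom  # X = t * ray, so the z-coordinate (depth) equals t
    return t if t > 0 else None


def depth_map_from_labels(labels, planes, fx, fy, cx, cy):
    """labels[v][u] indexes into planes, a list of (normal, offset) pairs."""
    return [
        [depth_from_plane(u, v, *planes[label], fx, fy, cx, cy)
         for u, label in enumerate(row)]
        for v, row in enumerate(labels)
    ]
```

Because the ray's z-component is fixed to 1, the ray parameter `t` is directly the depth along the optical axis.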

Video (comparison with a state-of-the-art multi-view stereo algorithm)

Acknowledgments

This work was supported in part by National Science Foundation grant IIS-0811878, the Office of Naval Research, the University of Washington Animation Research Labs, and Microsoft.

Contact: Yasutaka Furukawa

Last updated on 05/15/2009