Dense 3D Motion Capture for Human Faces


Abstract

This paper proposes a novel approach to motion capture from multiple, synchronized video streams, specifically aimed at recording dense and accurate models of the structure and motion of highly deformable surfaces such as skin, which stretches, shrinks, and shears in the midst of normal facial expressions. Solving this problem is a key step toward effective performance capture for the entertainment industry, but progress so far has been hampered by the lack of appropriate local motion and smoothness models. The main technical contribution of this paper is a novel approach to regularization adapted to nonrigid tangential deformations. Concretely, we estimate the nonrigid deformation parameters at each vertex of a surface mesh, smooth them over a local neighborhood for robustness, and use them to regularize the tangential motion estimation. To demonstrate the power of the proposed approach, we have integrated it into our previous work on markerless motion capture [9], and compared the performance of the original and new algorithms on three extremely challenging face datasets that include highly nonrigid skin deformations, wrinkles, and quickly changing expressions. Additional experiments with a dataset featuring fast-moving cloth with complex and evolving fold structures demonstrate that the adaptability of the proposed regularization scheme to nonrigid tangential motion does not hamper its robustness: it successfully recovers the shape and motion of the cloth without overfitting, despite the absence of stretch or shear in this case.
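
As a rough illustration of the "estimate per vertex, smooth over a local neighborhood, then regularize" idea described in the abstract, the sketch below uses a deliberately simplified stand-in for the deformation parameters: a single scalar stretch per vertex, computed from edge-length ratios. This is an assumption made for illustration only and is not the formulation used in the paper. All function names (`one_ring`, `vertex_stretch`, `smooth`, `target_edge_lengths`) are hypothetical.

```python
from collections import defaultdict
from math import dist  # Python 3.8+


def one_ring(edges):
    """Build one-ring vertex adjacency from a list of undirected mesh edges."""
    nbrs = defaultdict(set)
    for i, j in edges:
        nbrs[i].add(j)
        nbrs[j].add(i)
    return nbrs


def vertex_stretch(ref, cur, nbrs):
    """Per-vertex stretch (illustrative assumption): mean ratio of current
    to reference length over the edges incident to each vertex."""
    return {
        v: sum(dist(cur[v], cur[u]) / dist(ref[v], ref[u]) for u in ns) / len(ns)
        for v, ns in nbrs.items()
    }


def smooth(values, nbrs):
    """Average each vertex value with those of its one-ring neighbors."""
    return {
        v: (values[v] + sum(values[u] for u in ns)) / (len(ns) + 1)
        for v, ns in nbrs.items()
    }


def target_edge_lengths(ref, smoothed, edges):
    """Edge lengths a tangential regularizer could pull the mesh toward:
    reference length scaled by the mean smoothed stretch of the endpoints."""
    return {
        (i, j): 0.5 * (smoothed[i] + smoothed[j]) * dist(ref[i], ref[j])
        for i, j in edges
    }


# Toy example: a three-vertex strip uniformly stretched by a factor of 2.
ref = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
cur = [(0.0, 0.0, 0.0), (2.0, 0.0, 0.0), (4.0, 0.0, 0.0)]
edges = [(0, 1), (1, 2)]

nbrs = one_ring(edges)
stretch = smooth(vertex_stretch(ref, cur, nbrs), nbrs)
targets = target_edge_lengths(ref, stretch, edges)
```

With a rigid prior, the regularizer would penalize any departure from the reference edge lengths. Here the targets follow the locally estimated stretch, so genuine skin-like deformation is not penalized, while the neighborhood averaging damps noisy per-vertex estimates.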

Paper

Yasutaka Furukawa and Jean Ponce. Dense 3D Motion Capture for Human Faces. CVPR 2009.

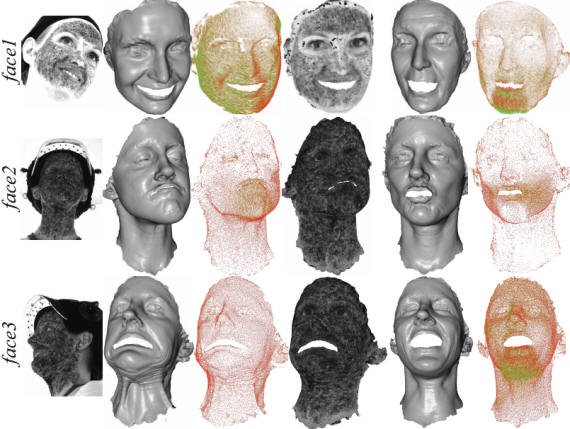

Reconstructed 3D structure and motion

Video

Acknowledgments

This work was supported in part by the National Science Foundation grants IIS-0535152 and IIS-0811878, the INRIA associated team Thetys, the Agence Nationale de la Recherche under grants Hfibmr and Triangles, the Office of Naval Research, the University of Washington Animation Research Labs, and Microsoft. We thank R. White, K. Crane and D.A. Forsyth for the pants dataset. We also thank Hiromi Ono, Doug Epps and ImageMovers Digital for the face datasets.

Contact: Yasutaka Furukawa

Last updated on 05/15/2009