Environment Matting Extensions: Towards Higher Accuracy and Real-Time Capture

Yung-Yu Chuang¹, Douglas Zongker¹, Joel Hindorff¹, Brian Curless¹, David Salesin¹,², Richard Szeliski²

¹University of Washington, ²Microsoft Research


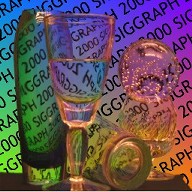

Comparisons between the composite results of the previously published algorithm, the higher accuracy environment matting technique described here, and reference photographs of the matted objects in front of background images. Lighting in the room contributed a yellowish foreground color F that appears, e.g., around the rim of the pie tin in the bottom row. (a) A faceted crystal ball causes rainbowing due to prismatic dispersion, an effect successfully captured by the higher accuracy technique since shifted Gaussian weighting functions are determined for each color channel. (b) Light both reflects off and refracts through the sides of a glass. This bimodal contribution from the background causes catastrophic failure with the previous unimodal method, but is faithfully captured with the new multi-modal method. (c) The weighting functions due to reflections from a roughly-textured pie tin are smooth and fairly broad. The new technique with Gaussian illumination and weighting functions handles such smooth mappings successfully, while the previous technique based on square-wave illumination patterns and rectangular weighting functions yields blocky artifacts.
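The caption above describes a pixel as a foreground color F plus contributions from several Gaussian weighting functions over the background, with a separately shifted Gaussian per color channel. The following is a minimal sketch of that idea, not the authors' implementation. The function names, the `lobes` dictionary layout, and the per-lobe `strength` factor are assumptions made for illustration, and any term for background seen directly through uncovered pixels is left out.

```python
import numpy as np

def gaussian_weights(shape, center, sigma):
    """Normalized, axis-aligned 2D Gaussian weights over an (H, W) background grid."""
    h, w = shape
    ys, xs = np.mgrid[0:h, 0:w]
    cx, cy = center
    g = np.exp(-0.5 * (((xs - cx) / sigma) ** 2 + ((ys - cy) / sigma) ** 2))
    return g / g.sum()

def composite_pixel(background, foreground, lobes):
    """Composite one pixel from an (H, W, 3) float background.

    foreground: length-3 color F added regardless of the background.
    lobes: hypothetical layout, one dict per mode (e.g. reflection, refraction):
        'strength': length-3 per-channel scale factors
        'centers':  three (cx, cy) pairs, one per channel, so channels may be
                    shifted relative to each other (dispersion)
        'sigma':    Gaussian width in background pixels
    """
    color = np.asarray(foreground, dtype=float).copy()
    for lobe in lobes:
        for ch in range(3):
            w = gaussian_weights(background.shape[:2], lobe["centers"][ch], lobe["sigma"])
            # Weighted average of this channel of the background under the Gaussian.
            color[ch] += lobe["strength"][ch] * np.sum(w * background[..., ch])
    return color
```

With a single lobe this reduces to a unimodal mapping; passing two lobes models the bimodal reflect-plus-refract case in (b), and giving each channel a different center models the dispersion in (a).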


Oriented weighting functions reflected from a pie tin. (a) The previous method yields blocky artifacts for smooth weighting functions. (b) Using the higher accuracy method with unoriented Gaussians (θ = 0) produces a smoother result. (c) Results improve significantly when we orient the Gaussians and solve for θ. In this case, θ is about 25 degrees over most of the bottom surface (facing up) of the pie tin. (d) Reference photograph.
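To make the role of θ concrete, here is a small sketch of an oriented elliptical Gaussian weighting function. It is an illustration under stated assumptions rather than the paper's formulation: the parameter names `sigma_u` and `sigma_v` (widths along the rotated axes) and the pixel-grid discretization are hypothetical choices, and θ is taken in radians.

```python
import numpy as np

def oriented_gaussian(shape, center, sigma_u, sigma_v, theta):
    """Normalized elliptical Gaussian on an (H, W) grid, rotated by theta radians.

    sigma_u is the width along the axis rotated by theta from the x axis,
    sigma_v the width along the perpendicular axis. theta = 0 gives an
    axis-aligned (unoriented) Gaussian.
    """
    h, w = shape
    ys, xs = np.mgrid[0:h, 0:w]
    dx = xs - center[0]
    dy = ys - center[1]
    # Rotate offsets into the Gaussian's own (u, v) coordinate frame.
    u = dx * np.cos(theta) + dy * np.sin(theta)
    v = -dx * np.sin(theta) + dy * np.cos(theta)
    g = np.exp(-0.5 * ((u / sigma_u) ** 2 + (v / sigma_v) ** 2))
    return g / g.sum()

# Example: a Gaussian elongated along a direction about 25 degrees from the x axis.
weights = oriented_gaussian((65, 65), (32, 32), 8.0, 2.0, np.deg2rad(25.0))
```

Fixing `theta` at 0 corresponds to the unoriented case in (b); treating it as a free parameter per pixel corresponds to the oriented case in (c).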


Towards Real-Time Capture

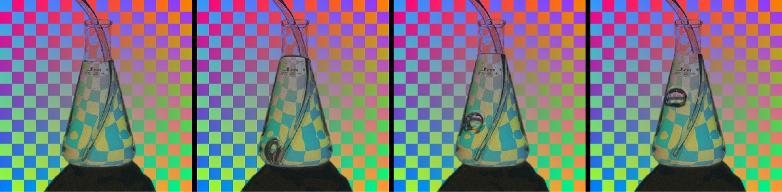

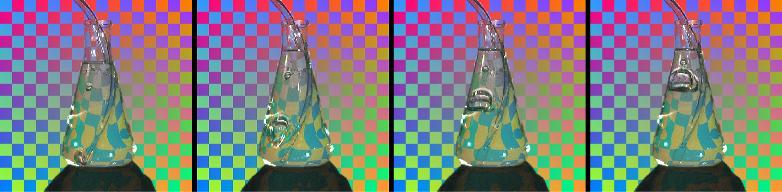


Sample frames from four environment matte video sequences. Rows (a) and (b) show bubbles being blown in an Erlenmeyer flask filled with glycerin, while rows (c) and (d) show a glass being filled with water. Sequences (a) and (c) were captured with no lighting other than the backdrop, so that the foreground color is zero. Sequences (b) and (d) were captured separately with the lights on, and the foreground estimation technique is used to recover the highlights.


| 7.5MB AVI | 7.3MB AVI | 7.1MB AVI |